Chapter 4: How To Use Google’s Meridian

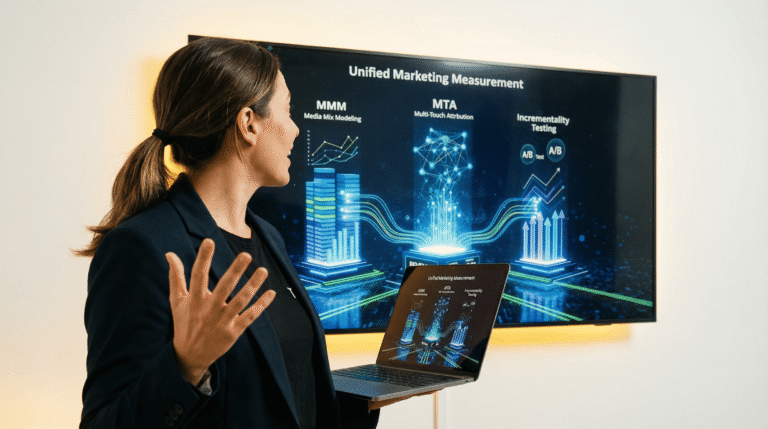

Meridian is Google’s open-source framework for marketing mix modeling (MMM). It is built on a Bayesian hierarchical model and designed to tackle one of the classic challenges in observational data science: estimating causal effects from complex, correlated time-series data. For a data scientist, its appeal lies not just in the answers it provides but in the statistical rigor of its methods.

The Philosophical Core: Bayesian Causal Inference

At its foundation, Meridian operates on a philosophy of probabilistic causal inference. It implicitly encourages the data scientist to think in terms of causal graphs (specifically, Directed Acyclic Graphs or DAGs). The model’s structure is an attempt to codify the assumed relationships between marketing channels, external factors (like seasonality and competitor activity), consumer behavior (like organic search), and the final business outcomes. The goal is to statistically “block the back-door paths” by controlling for confounders, thereby isolating the direct causal path from an advertising channel to the target variable.
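To make the back-door idea concrete, here is a minimal simulation sketch. It is not Meridian code: the variable names, coefficients, and the use of plain least squares are all assumptions chosen for illustration. Seasonality drives both ad spend and sales, so a regression that omits it overstates the effect of spend, while one that controls for it recovers a value close to the true effect.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 5000

# Hypothetical data-generating process (all numbers are illustrative).
season = rng.normal(size=n)                    # confounder
spend = 0.8 * season + rng.normal(size=n)      # spend rises in season
true_effect = 2.0
sales = true_effect * spend + 3.0 * season + rng.normal(size=n)

def ols(columns, y):
    """Least-squares coefficients, with an intercept in position 0."""
    X = np.column_stack([np.ones(len(y)), *columns])
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef

# Back-door path left open: seasonality is omitted from the regression.
naive = ols([spend], sales)[1]

# Back-door path blocked: seasonality is included as a control.
adjusted = ols([spend, season], sales)[1]

print(f"true effect:     {true_effect:.2f}")
print(f"naive estimate:  {naive:.2f}")
print(f"with control:    {adjusted:.2f}")
```

The naive estimate absorbs part of the seasonal effect because spend and seasonality move together. Adding the confounder as a regressor is the simplest form of the adjustment that the model’s control variables are meant to perform.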

This is where the Bayesian MMM approach becomes essential. Frequentist methods typically report a point estimate with a confidence interval, and that interval cannot be read as a probability statement about the parameter itself. Meridian instead treats every parameter, from a simple intercept to a complex media effect coefficient, as a probability distribution. This lets the model express uncertainty directly: instead of a single ROI value of 2.5, it might conclude, “There is a 95% probability that the ROI for this channel lies between 1.8 and 3.2” (a 95% credible interval). This built-in quantification of uncertainty is invaluable for decision-making.
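A statement like the one above is read straight off posterior samples. The sketch below assumes you already have an array of posterior draws for one channel’s ROI. Here they are simulated from a normal distribution purely as a stand-in, and `roi_samples` is a hypothetical name, not a Meridian output.

```python
import numpy as np

rng = np.random.default_rng(42)

# Stand-in for posterior draws of a channel's ROI (simulated, not fitted).
roi_samples = rng.normal(loc=2.5, scale=0.36, size=20_000)

# Equal-tailed 95% credible interval: the 2.5th and 97.5th percentiles.
lower, upper = np.percentile(roi_samples, [2.5, 97.5])

# Any probability statement is just a fraction of draws.
prob_above_one = float(np.mean(roi_samples > 1.0))

print(f"posterior mean ROI: {roi_samples.mean():.2f}")
print(f"95% credible interval: [{lower:.2f}, {upper:.2f}]")
print(f"P(ROI > 1): {prob_above_one:.3f}")
```

Because the output is a set of draws rather than a single number, questions such as “how likely is it that this channel at least breaks even?” reduce to counting samples.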

What is the Bayesian approach made of?

The Bayesian approach revolves around three components:

- Prior Distributions: This is where the data scientist’s domain expertise is encoded. Before seeing the data, you can set priors on parameters. For instance, based on prior experiments, you might set a prior on an ROI parameter that suggests it’s unlikely to be negative or above 10. This helps regularize the model and guide it towards more plausible solutions, especially with noisy data.

- Likelihood Function: This is the heart of the statistical model, defining the assumed data-generating process. For example, using a Normal likelihood assumes your residuals are normally distributed, while a Negative Binomial likelihood is more appropriate for overdispersed count data (like daily conversions), which is a common scenario.

- Posterior Distribution: This is the result of combining the prior with the data via the likelihood. The posterior is the “answer”: a full probability distribution for every parameter in the model, representing our updated belief after observing the data.
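The three components can be seen working together in a toy case where the posterior has a closed form. The sketch below assumes a Normal prior on a single ROI parameter and a Normal likelihood with known noise. All numbers are made up, and a real MMM has many more parameters and needs sampling-based inference rather than a formula.

```python
import numpy as np

# Prior: belief about ROI before seeing data (illustrative values).
prior_mean, prior_sd = 2.0, 1.0

# Likelihood: each observation is the true ROI plus Normal noise
# with a known standard deviation (a simplifying assumption).
noise_sd = 1.5
rng = np.random.default_rng(7)
observed = rng.normal(loc=3.0, scale=noise_sd, size=20)  # simulated data

# Posterior: for a Normal prior and Normal likelihood, precisions add
# and the posterior mean is a precision-weighted average.
n = observed.size
prior_precision = 1.0 / prior_sd**2
data_precision = n / noise_sd**2
post_precision = prior_precision + data_precision
post_mean = (
    prior_precision * prior_mean + data_precision * observed.mean()
) / post_precision
post_sd = post_precision**-0.5

print(f"prior:       mean={prior_mean:.2f}, sd={prior_sd:.2f}")
print(f"sample mean: {observed.mean():.2f} (n={n})")
print(f"posterior:   mean={post_mean:.2f}, sd={post_sd:.2f}")
```

The posterior mean always lands between the prior mean and the sample mean, and the posterior standard deviation is smaller than the prior’s. This is the regularizing effect of the prior described above: with little or noisy data the prior dominates, and with more data the likelihood takes over.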
